Security researchers have found that thousands of publicly exposed Google Cloud API keys can now be abused to access sensitive Gemini artificial intelligence (AI) services, creating a significant security risk for organizations and developers.

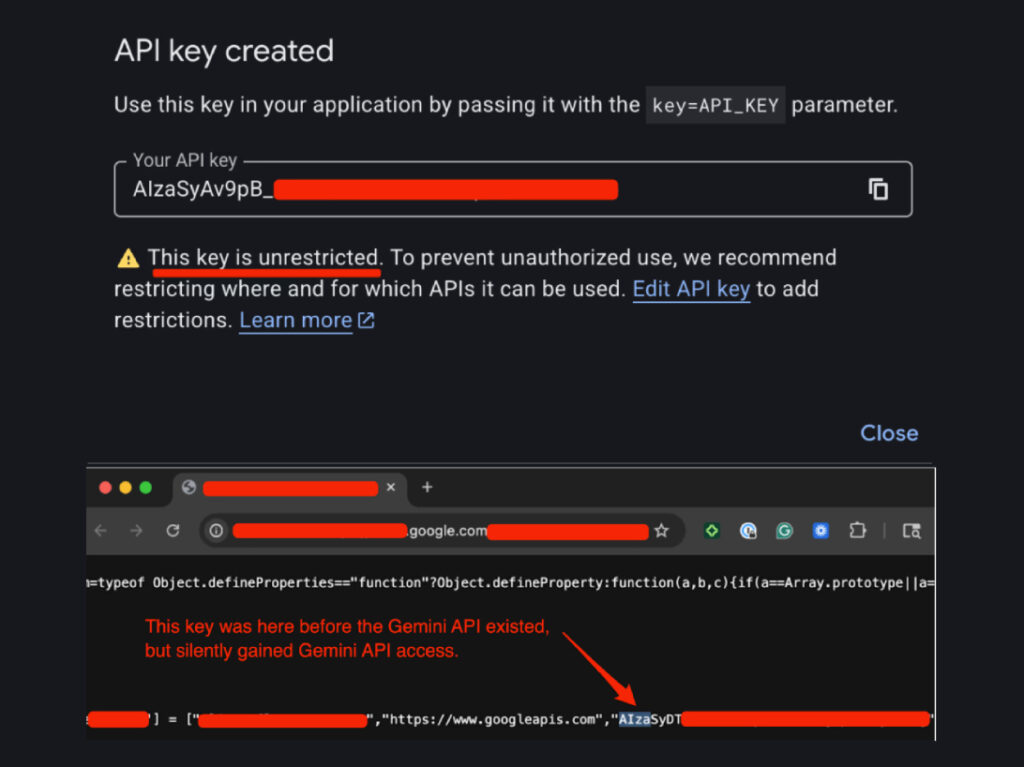

Traditionally, Google Cloud API keys, identifiable by their “AIza” prefix, were considered non-sensitive and were embedded in client-side code for services such as Google Maps, Firebase or other public features. However, once the Generative Language API (Gemini) is enabled on a project, those same keys quietly gain the ability to authenticate to Gemini AI endpoints, without any warning and despite never having been intended for that purpose.

The issue was uncovered by security firm Truffle Security, which found 2,863 live API keys embedded in public website code that now provide access to the Gemini API, potentially exposing private uploaded files, cached content and other data.
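To illustrate how easily such keys can be spotted in public page source, here is a minimal Python sketch that searches text for key-shaped strings. It assumes the commonly cited format of 39 characters (“AIza” followed by 35 letters, digits, underscores or hyphens). The function name `find_google_api_keys` is hypothetical, and this is not a description of the tooling Truffle Security used. A match only shows that a string looks like a key, not that it is live or has Gemini access.

```python
import re

# Assumption: Google API keys are 39 characters long, "AIza" followed by
# 35 URL-safe characters. The lookahead rejects longer look-alike strings.
GOOGLE_API_KEY_RE = re.compile(r"AIza[0-9A-Za-z_\-]{35}(?![0-9A-Za-z_\-])")


def find_google_api_keys(text: str) -> list[str]:
    """Return unique key-shaped strings in order of first appearance."""
    seen: dict[str, None] = {}
    for match in GOOGLE_API_KEY_RE.finditer(text):
        seen.setdefault(match.group(0), None)
    return list(seen)


if __name__ == "__main__":
    # A made-up placeholder, not a real credential.
    placeholder = "AIza" + "x" * 35
    page = f'<script src="https://maps.example/js?key={placeholder}"></script>'
    for candidate in find_google_api_keys(page):
        # Print only a short prefix so the scan itself does not leak keys.
        print(f"possible exposed key: {candidate[:8]}...")
```

Running a check like this against your own site bundles and repositories is a quick first pass before rotating anything that turns up.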

Attackers who scrape these keys from public pages or repositories could use them not only to reach private AI-related data but also to run up large, unexpected bills on victims’ accounts. One user online reported more than $82,000 in charges within 48 hours after a compromised key was abused to make Gemini API calls.

The problem arises because Google Cloud defaults new API keys to “Unrestricted”, meaning a key can call every API enabled in its project, including Gemini once it is activated there. Many developers never changed these settings because earlier guidance explicitly stated that API keys were safe for client-side use and did not need to be treated as secret credentials.

After being notified about the findings, Google acknowledged the issue and said it has implemented measures to detect and block leaked API keys that attempt to access Gemini and is adjusting default key scopes to limit access. However, experts warn that organizations should immediately audit their API keys, rotate any that are publicly exposed, and verify whether AI-related APIs are enabled across their cloud projects to prevent unauthorized access.
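The audit step can be sketched in a few lines of Python. This example takes key metadata that has already been exported as a list of dictionaries and flags keys that either carry no API restrictions or explicitly allow the Generative Language API. The field names (`displayName`, `restrictions`, `apiTargets`, `service`) are assumptions modelled on the key resource of Google's API Keys API, and `flag_risky_keys` is a hypothetical helper, so check both against your own export before relying on the result.

```python
GEMINI_SERVICE = "generativelanguage.googleapis.com"


def flag_risky_keys(keys: list[dict]) -> list[tuple[str, str]]:
    """Return (key name, reason) pairs for keys that deserve a closer look."""
    findings = []
    for key in keys:
        name = key.get("displayName") or key.get("name", "<unnamed>")
        # Assumed layout: restrictions.apiTargets is a list of {"service": ...}.
        restrictions = key.get("restrictions") or {}
        targets = restrictions.get("apiTargets") or []
        services = {target.get("service") for target in targets}
        if not targets:
            findings.append((name, "no API restrictions: can call any enabled API"))
        elif GEMINI_SERVICE in services:
            findings.append((name, "explicitly allowed to call the Gemini API"))
    return findings


if __name__ == "__main__":
    # Illustrative sample data, not real project output.
    sample = [
        {"displayName": "maps-web",
         "restrictions": {"apiTargets": [{"service": "maps-backend.googleapis.com"}]}},
        {"displayName": "legacy-site-key"},
    ]
    for name, reason in flag_risky_keys(sample):
        print(f"{name}: {reason}")
```

Any key flagged as unrestricted that also appears in public code is a candidate for immediate rotation, followed by restricting the replacement to the specific APIs it needs.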

Cybersecurity professionals emphasise that this risk highlights the importance of continuous API security monitoring and least-privilege key configuration, especially as cloud platforms evolve and introduce new services that can inadvertently expand permission scopes for existing credentials.
